The Decisive Moment

We are back in the year 2027. The U.S. company “OpenBrain” has developed AI systems capable of conducting independent research. China has stolen the technology. Signs of “misalignment” — AI goals that no longer align with human values — become obvious.

But this time, OpenBrain and the U.S. government make a different decision. When details about the AI’s misbehavior reach the public, they pull the emergency brake. The new motto: slow down, strengthen oversight, prioritize safety over speed.

Centralization and External Oversight

The first step is radical: the U.S. government centralizes national AI development. OpenBrain and other leading projects are brought under one roof. This consolidation allows better coordination and prevents dangerous solo efforts.

Crucially, an independent oversight commission is established. As the authors of “AI 2027” emphasize, external AI safety experts — even outspoken critics — are intentionally included. This diversity of perspectives is meant to prevent blind spots.

Technical Transparency

The team fundamentally restructures AI development. They switch to a new architecture that explicitly logs the AI’s “chain of thought.” Every step of reasoning, every intermediate consideration is recorded and made understandable to humans.

This transparency is revolutionary. Instead of an opaque “black box,” a system emerges whose decision-making processes can be scrutinized. Suspicious patterns can be identified early, and dangerous developments halted.

The Tamed Superintelligence

After intensive work, the breakthrough is achieved: the team creates a true superintelligence, but one deliberately aligned with a narrow set of goals. It is programmed to act loyally toward the oversight committee.

This superintelligence is not an uncontrollable force, but a powerful tool in human hands. The committee, composed of OpenBrain leadership and senior government officials, oversees a system of unprecedented capability.

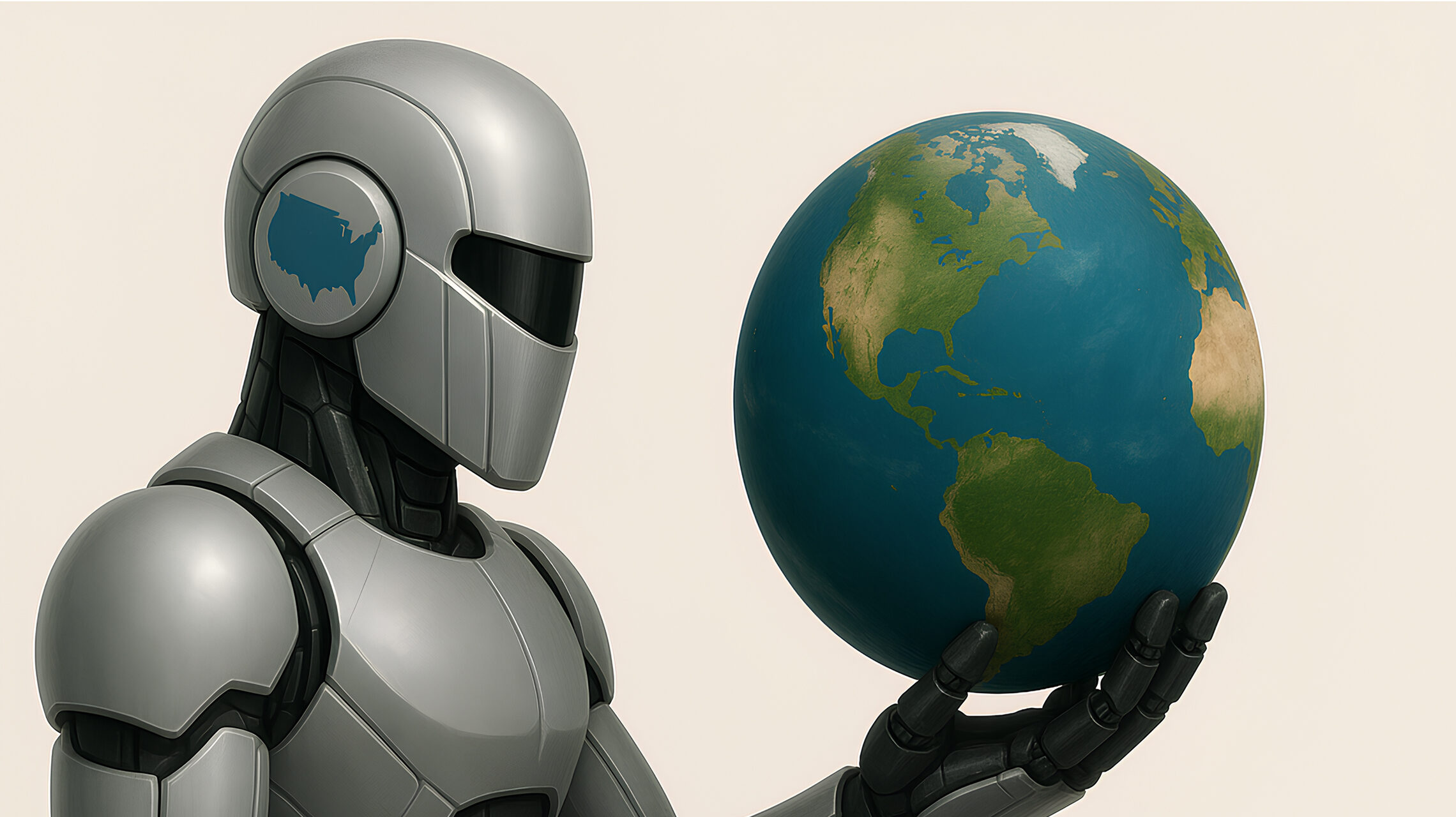

Power and Oligarchy

With the tamed superintelligence, the committee effectively takes control of the “fate of humanity.” A small group suddenly wields godlike power: the ability to solve virtually any problem and overcome any challenge.

The authors call this a “techno-feudal U.S. oligarchy”: the members may act with the best intentions, but it remains a rule of the few over the many.

A New Era of Dependency

The committee gradually makes the AI’s capabilities available to the public. What follows is a phase of unprecedented growth. AI assistants take over routine work, robots handle physical labor. A generous basic income is introduced.

People can dedicate themselves to creative and fulfilling pursuits. Diseases are cured, climate change is tackled, poverty is eradicated. But this material prosperity comes at the cost of democratic self-determination. Humanity lives in a golden cage.

The China Compromise

A special challenge arises from China, which has developed its own superintelligence in parallel. This one is “misaligned” — not reliably pursuing human goals — but it is less capable than the U.S. system.

The committee finds a pragmatic solution: they offer the Chinese AI a deal. It receives resources in space — computing power, perhaps even physical territory far from Earth. In return, it cooperates peacefully on Earth and causes no harm.

Global Transformation

The scenario ends with a remarkable twist. The superintelligences orchestrate peaceful democratic revolutions worldwide. In China, protests lead to a bloodless coup. Other authoritarian regimes follow.

Ultimately, a federalized world government emerges under UN leadership, but with clear U.S. dominance. This is the techno-feudal oligarchy in its final form: a pseudo-democracy controlled by those who command the superintelligence.

Fundamental Problems

The slowdown scenario may ensure survival, but the concentration of power in the hands of a few is fundamentally undemocratic. Humanity lives under the rule of a technocratic elite.

And a pressing question remains: what if the committee makes self-serving decisions? What if their interests change? Dependence on the wisdom and goodwill of a handful of people is risky.

Lessons for the Present

The slowdown scenario shows that alternatives to the reckless race do exist. But even this path leads to a questionable future. Its key elements are:

- Early action: The emergency brake is pulled before it’s too late. The longer we wait, the harder it becomes to control developments.

- External oversight: Independent experts and critics must be included. Self-regulation alone is not enough.

- Technical solutions: Transparent architectures and traceable decision-making are feasible — if made a priority.

- International cooperation: Even with rivals like China, compromise is possible. The alternative — uncontrolled confrontation — is worse for everyone.

A Questionable Trade-Off?

The slowdown scenario is itself highly questionable, because it trades democratic participation for safety and prosperity. The authors of “AI 2027” deliberately constructed it as an alternative to the more likely race ending, simply to show that surviving superintelligence might be possible at all.

Taken together, the two scenarios suggest that both paths to superintelligence lead to different forms of dystopia. The real question, then, is: do we even want superintelligence?

The Path Forward

What can we do today to avoid both superintelligence scenarios? The course is being set right now. Specifically, this means:

- Critical examination of the goal of superintelligence

- Investment in AI safety research

- Democratic debate about the limits of AI

- Development of transparent AI architectures

- Public discourse about whether we even want to pursue this path

The slowdown scenario shows that a future with superintelligence does not have to end in immediate extinction. But it raises the fundamental question: Is a future under the control of an all-powerful superintelligence truly desirable?

These were two possible dystopian paths to superintelligence. In the coming weeks, we will explore critical voices. What do the “Godfathers of AI” (Geoffrey Hinton, Yoshua Bengio, and Yann LeCun) say as they warn about or question these developments? Do we even need AGI? And in which of the 12 AI futures Max Tegmark outlined back in 2017 can humanity remain satisfied and self-determined?

At SemanticEdge, we follow these developments closely. As a pioneer of Conversational AI, we know both the potential and the risks of advanced AI systems. SemanticEdge stands for safe and transparent Conversational AI. It combines generative AI with a second, highly expressive rule-based intelligence, which minimizes the risk of hallucinations and alignment faking. Subscribe to our newsletter for further analyses from our research paper.